Data-based echo is very common in chipmusic, of course, whether it uses a single channel (voice) or two or more voices. In all cases, note data is copied and repeated at a later time (often after a rhythmically relevant delay, longer than around 80 ms), creating a richer sound for a given section, track, phrase or instrument.

An extension of this idea is the creation of artificial, data-based reverberation. Naturally, this process uses up more resources, both in terms of computing power (more musical events must be synchronised and timed correctly) and in terms of voicing and channels (the more channels, the fuller and more realistic the reverberation may sound).

The YM2413 chip is a very good candidate for experimentation with artificial, data-driven reverberation, as it has quite a number of channels for a chip of its era (nine FM channels).

The way this reverberation is created is very straightforward. The same line is repeated across all nine channels. Each subsequent channel is delayed a little further (in this case, by 35 ms per channel) and made a little quieter (in this case, each channel's volume data byte is 1/16th lower than that of the previous channel).
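To make the arithmetic concrete, here is a minimal Python sketch of the per-channel settings described above. It is only an illustration, not the code used for the recordings: the `Tap` structure and the `reverb_taps` function are hypothetical names, and I am assuming volume can be treated as an abstract level from 0 to 15 (15 = loudest), so that "1/16th lower" means one level down. The value actually written to the chip may need converting from this.

```python
from dataclasses import dataclass

NUM_CHANNELS = 9   # nine FM channels, as described above
DELAY_MS = 35      # extra delay added per channel
MAX_LEVEL = 15     # assumption: 16 abstract volume levels, 15 = loudest


@dataclass
class Tap:
    channel: int   # 0 is the dry (original) channel
    delay_ms: int  # how late this channel plays the line
    level: int     # abstract volume level, not a raw register value


def reverb_taps(base_level=MAX_LEVEL, num_channels=NUM_CHANNELS, delay_ms=DELAY_MS):
    """Return one Tap per channel: each is later and one level quieter."""
    taps = []
    for ch in range(num_channels):
        level = max(base_level - ch, 0)  # never drop below silence
        taps.append(Tap(channel=ch, delay_ms=ch * delay_ms, level=level))
    return taps


if __name__ == "__main__":
    for tap in reverb_taps():
        print(tap)
```

With these numbers, the last of the nine channels plays the line 280 ms after the first, at roughly half the starting level.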

The outcome is relatively convincing, considering that it is only a data-driven application of reverberation. Listen below.

YM2413 - simple example of a virtual space - no space (dry)

YM2413 - simple example of a virtual space - space (wet)

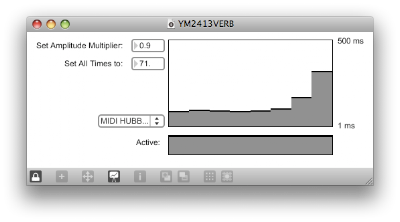

I've made a simple Max/MSP patch that takes MIDI data in the form of a monophonic, single-channel note stream and copies it to multiple channels, applying a predefined amplitude multiplier and a cascaded delay to each copy. The result is easy data-based reverberation for the YM2413.
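For readers without Max/MSP, the same fan-out idea can be sketched in a few lines of Python. This is not a port of the patch, just a rough illustration under my own assumptions: notes are plain `(time_ms, pitch, velocity)` tuples rather than real MIDI messages, `fan_out` is a hypothetical function name, and the `gain` of 0.8 is an arbitrary example value for the amplitude multiplier.

```python
def fan_out(notes, num_channels=9, delay_ms=35, gain=0.8):
    """Copy a monophonic note stream to several channels.

    notes: list of (time_ms, pitch, velocity) tuples.
    Returns a time-sorted list of (time_ms, channel, pitch, velocity)
    events, where each channel is delayed by a further delay_ms and
    scaled by a further factor of gain.
    """
    events = []
    for time_ms, pitch, velocity in notes:
        for ch in range(num_channels):
            events.append((
                time_ms + ch * delay_ms,        # cascaded delay
                ch,
                pitch,
                round(velocity * gain ** ch),   # cascaded amplitude multiplier
            ))
    # Sort by time, then channel, so events can be played back in order.
    events.sort(key=lambda e: (e[0], e[1]))
    return events


if __name__ == "__main__":
    for event in fan_out([(0, 60, 100), (500, 64, 100)], num_channels=3):
        print(event)
```

Sorting by time matters once notes are closer together than the total delay, since the copies of one note then interleave with the copies of the next.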